The mid-1970s were a genuinely fun time to be a kid, at least when it came to machines and games. We played Pong on Zenith TVs in the family room, then graduated to Atari 2600s and Intellivisions on Sony Trinitrons. The television was becoming a screen you could interact with. Something was shifting, even if none of us had words for it yet.

School was a different story. In my small Northeast Ohio town, our middle school had, if I remember correctly, a single Apple II kept in a closet and rationed by the science teacher. What we actually had unlimited access to, in the library, were Atari 800s. Those were the first machines I really goofed around on, mostly trying to build simple games in BASIC. The Apple II was for real stuff, stuff that mattered. The distinction was already visible to us as kids: one machine was for playing, the other was for doing. The Apple had an aura of purposefulness that the Atari, for all its charms, never quite achieved.

By high school in the early 1980s, the Apple II had become a de facto standard, though we still typed our term papers on typewriters and handed them in on paper. The shift toward computing was visible, but it hadn't fully arrived. The computer was something you visited, not something you were immersed in. What I couldn't have articulated then, but can see clearly now, is that I was watching a translation layer being built in real time. Apple wasn't just selling machines. It was teaching institutions, and a generation, what machines were for.

I wandered away from Apple for a long time after that. The world of DEC VAX terminals and Stratus minicomputers had its own austere logic. College computing was serious and impersonal. You learned to speak to the machine in its own language, or you didn't get results. But Apple was always somewhere in the room. In the journalism lab. In the design program. On the desk of anyone trying to make something look like it had been made on purpose. Apple ruled the creative side because Apple was beginning to understand something the rest of the industry was still oblivious to: that the machine's job is to serve human intention, not the other way around.

That understanding is what I want to explore here, on Apple's 50th anniversary. Not the products, not the legendary biography of Steve Jobs, not the design language or the retail stores or the trillion-dollar valuation. Those stories are being told very well elsewhere. David Pogue's exhaustive new history, Apple: The First 50 Years, covers that ground with interviews with 150 people who were inside the story. What I want to talk about is something that underlies all of it and rarely gets named directly: the idea that Apple has functioned, throughout its existence, as a cultural operating system.

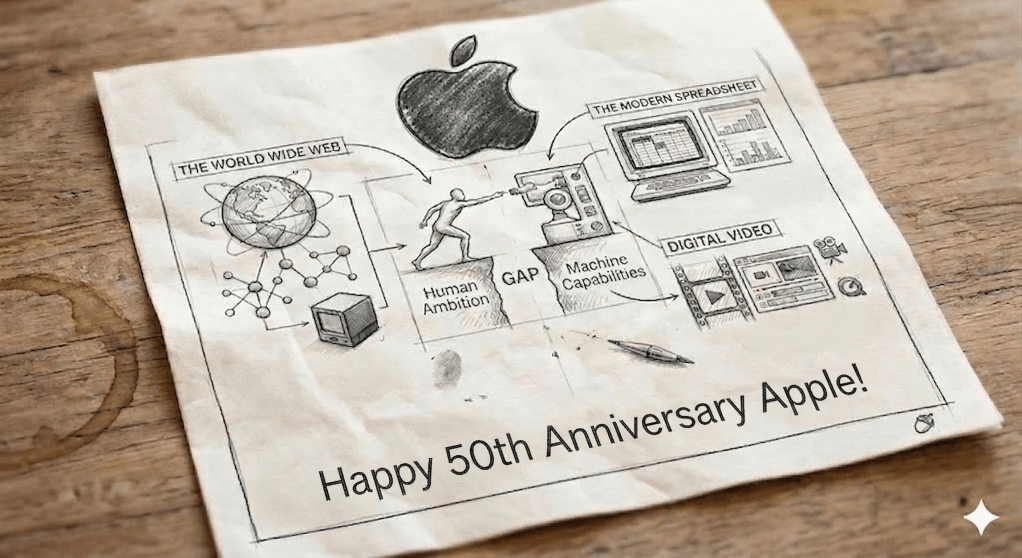

What if closing the gap between what humans can imagine and what machines can actually do wasn’t Apple’s founding mission, but became, through decades of compounding decisions, the thing Apple turned out to be for?

Defining a cultural operating system

An operating system, in the general sense, is a translation layer. It sits between raw hardware (processors, memory, and storage) and the human beings who want to do things. Without it, you would need to understand machine instructions at a level no ordinary person thinks in. The OS abstracts that complexity away, creating a coherent, legible surface where human intention can be expressed and executed.

Jobs famously articulated Apple's mission as operating at "the intersection of the liberal arts and technology." That phrase has been repeated so often that it risks losing its power. But it means something precise: the liberal arts, by which I mean the study of how human beings think, feel, reason, create, and communicate, should be as central to the design of a technology product as the engineering underneath it. You need to understand people as well as you understand circuits. You need to build for the full human, not just for the technically literate fraction.

The result, when Apple gets it right, is a company that functions as a translation layer not just between fingers and transistors, but between human creative ambition and technological possibility. It makes the machine legible to human intent. It makes human intent legible to the machine. That is the function of an operating system, and Apple has been doing it, at a civilizational scale, for fifty years.

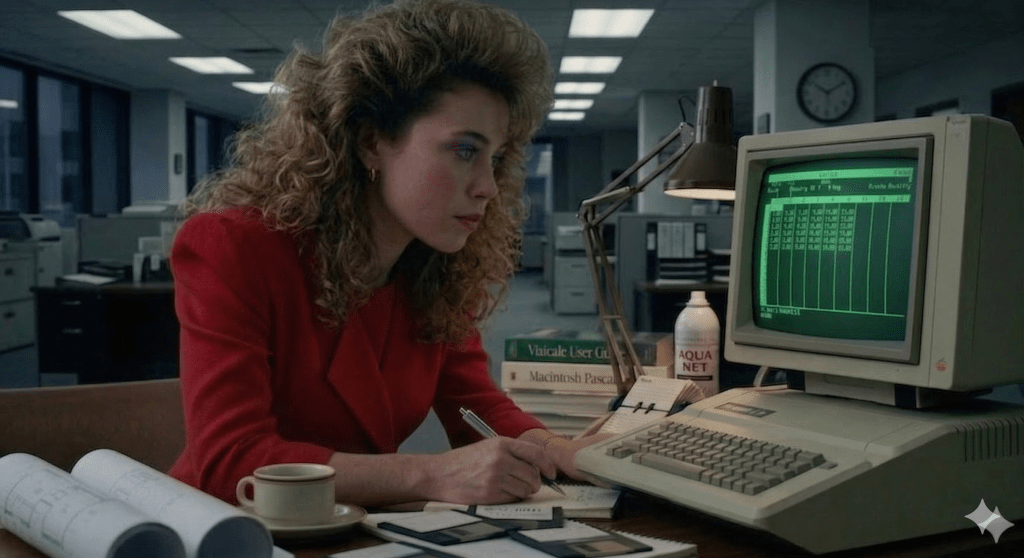

The spreadsheet and the genesis of modern business

To understand how early and how deep this pattern runs, start in the late 1970s, when personal computers were still a novelty. Dan Bricklin, then a Harvard student, watched a professor laboriously revise financial projections on a blackboard: erasing cells, recalculating by hand, propagating changes one at a time through a tableau that had to be rebuilt from scratch every time an assumption shifted. Bricklin saw something the professor couldn't. This was a translation problem. Human financial reasoning naturally worked in interconnected grids of relationships. Machines could model that. The gap was a piece of software that didn't yet exist.

VisiCalc, released in 1979 for the Apple II, was that software. It is widely credited with transforming the microcomputer from a pastime for computer enthusiasts into a serious business tool, and the transformation was immediate and profound. Steve Wozniak said that small businesses, not the hobbyists he and Steve Jobs had expected, purchased 90% of Apple IIs. Apple dealers said that VisiCalc had caused more business computer sales than all other software combined. Jobs himself later reflected that VisiCalc “propelled the Apple II to the success it achieved more than any other single event.”

What VisiCalc actually did was translate a form of human reasoning, specifically the scenario-planning, assumption-testing, what-if logic of financial analysis, into something a machine could execute at speed. Changing any single cell would cascade through the entire sheet automatically. This could turn 20 hours of work into 15 minutes. That is not merely a productivity gain. It is a cognitive transformation: a new way of thinking about business logic that only became possible when the translation layer existed.

The downstream value has been incalculable. Every financial model, every budget, every business plan built in the decades since rests on the conceptual architecture Bricklin laid down on an Apple II. The entire practice of modern management, its quantitative culture, its scenario-planning ethos, its comfort with rapid iteration of assumptions: all of it was shaped by what a piece of software running on an Apple machine made thinkable. [Excellent post on the impact of the spreadsheet].

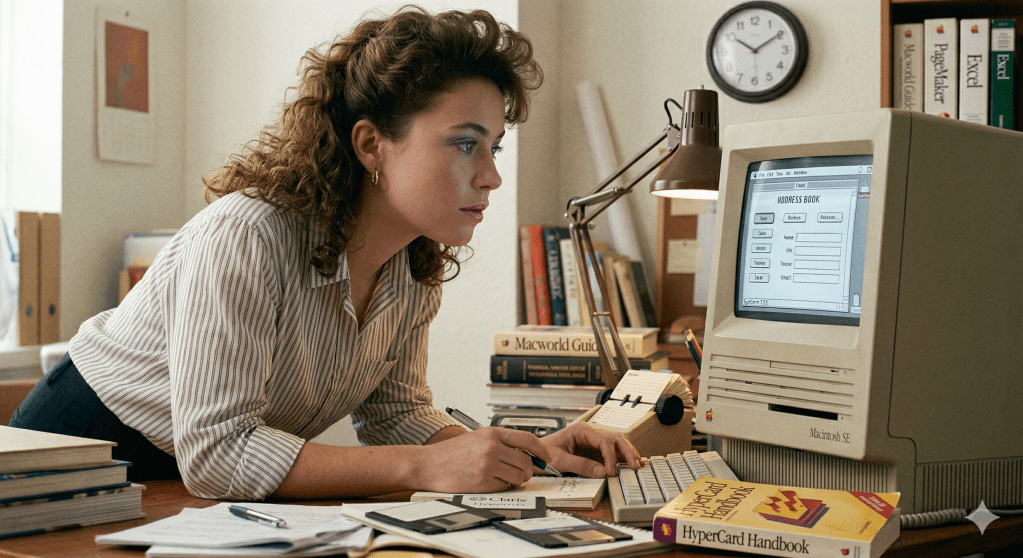

HyperCard, NeXT, and the web that almost wasn’t

Eight years after VisiCalc, a different translation was happening inside Apple itself. Bill Atkinson, the programmer who had built MacPaint and who understood more viscerally than almost anyone what it meant to make a computer feel like a creative tool, was working on something he described as a "software erector set." HyperCard was released on August 11, 1987, to coincide with the first day of the Macworld Conference and Expo in Boston. Atkinson had agreed to give HyperCard to Apple only if the company promised to release it for free on all Macs.

HyperCard was a system for linking information: cards connected to cards, ideas linked to ideas, with a scripting language called HyperTalk that let non-programmers build interactive applications. Atkinson described it as “programming for the rest of us.” He later lamented that if he had only realized the power of network-oriented stacks instead of focusing on local stacks on a single machine, HyperCard could have become the first web browser. It didn’t. But something it inspired did.

At CERN in the late 1980s, a Belgian systems engineer named Robert Cailliau was looking for an internal documentation tool. He was evaluating Apple's HyperCard as a potential system for CERN when he encountered Tim Berners-Lee's proposal for a networked information system, and he immediately recognized its potential. The collision of HyperCard's conceptual model with Berners-Lee's networked hypertext idea, implemented on a NeXT workstation (built by NeXT, the company Jobs founded after his 1985 exile from Apple), produced the World Wide Web.

By December 1990, Berners-Lee and his team had built all the tools necessary for a working web: the HyperText Transfer Protocol (HTTP), the HyperText Markup Language (HTML), the first web browser, the first web server, and the first website. The code for the web server was developed on a NeXT computer. Berners-Lee made his invention available freely, with no patent and no royalties. When a panel of 25 eminent scientists, academics, writers and world leaders was asked to identify the cultural moments that most shaped the modern world, it ranked the invention of the World Wide Web number one.

The lineage is direct. Apple's commitment to making computers accessible to non-programmers produced HyperCard. HyperCard gave Cailliau a mental model. Cailliau helped Berners-Lee refine and champion his proposal. Berners-Lee built it on a NeXT, the product of Steve Jobs's post-Apple company and its same design philosophy. NeXT was later acquired by Apple, bringing with it the underpinnings of the modern macOS. The modern web, and the trillions of dollars of value it has generated, traces back to Apple's foundational conviction that human beings who aren't engineers deserve access to the power of linked information.

QuickTime and the grammar of digital media

The pattern appears again in 1991, with QuickTime. Before QuickTime, digital video was a research curiosity. Moving images on a personal computer required specialized, expensive hardware. It wasn't something ordinary people or ordinary machines could do. QuickTime was Apple's attempt to change that, to make time-based media a first-class citizen in the personal computing environment.

The implications unfolded slowly and then all at once. QuickTime established the conceptual and technical grammar that digital media would follow: the idea that video, audio, animation, and interactive elements could live inside a unified container that a computer could play, scrub, edit, and share. That grammar became the foundation on which the entire digital entertainment, education, and media industry was built. Every streaming service, every video call, every podcast, every online course descends from the framework Apple was building in the early 1990s: the conviction that the computer should be a window onto human expression in all its time-based richness.

The pattern behind the products

What connects VisiCalc, HyperCard, QuickTime, the LaserWriter, desktop publishing, the iPod, the iPhone, and everything else in Apple’s history is a consistent underlying move: identify a form of human expression or reasoning that existing technology cannot yet support, and build the translation layer that makes it possible. The products are the expression of that move. The move itself is the company.

This is why Apple’s influence has always exceeded its market share at any given moment. A cultural operating system doesn’t need to be running on every device to shape how everyone thinks about what devices are for. Apple seeded the computing imagination of multiple generations of designers, educators, artists, journalists, and entrepreneurs. The people who built the businesses and tools of the digital economy learned what a computer could feel like on an Apple machine. They carried that expectation into everything else they built.

The most durable thing Apple ever shipped wasn’t hardware. It was a set of expectations about what the relationship between human beings and machines should feel like.

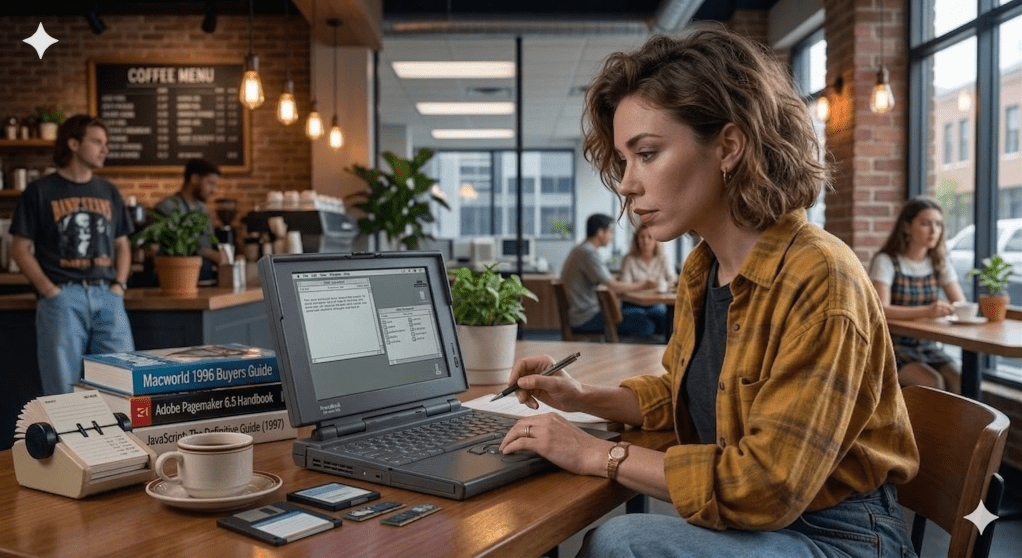

On AI: behind, or in waiting?

The current narrative about Apple and artificial intelligence is that the company is behind. Siri lags ChatGPT. Apple Intelligence arrived late. The flashiest AI demos are coming from OpenAI, Google, and Anthropic, not from Cupertino. If you measure by headlines and chatbot benchmarks, the narrative holds.

But there’s a different way to read the situation, one that fits Apple’s historical pattern far better than “falling behind” does.

AI, as it currently exists, has not yet risen to the level of a cultural operating system. The dominant AI products are impressive demonstrations of capability: they can write, reason, code, and converse at levels that feel genuinely new. But they are largely cloud-dependent, privacy-indifferent, and optimized for raw performance rather than the seamless translation of human intent. They are powerful tools in search of a human-centered interface layer. They are, in other words, exactly where computing was before the Macintosh: technically extraordinary, but not yet organized around the human being on the other side of the screen.

It’s also likely true that Apple has not, as far as anyone can tell from the outside, looked at this landscape and said: “We will build the cultural OS layer for AI.” What appears to have happened is something more organic. A company that has spent decades betting on privacy, on vertical integration, on per-watt performance, on making intelligence feel native rather than bolted on, finds itself somewhat accidentally holding most of the right cards for the next translation moment.

The privacy stance, long treated as a philosophical constraint, quietly pushed Apple toward on-device processing long before edge AI became the industry's preoccupation. The silicon investments, specifically the Neural Engines baked into every chip in the lineup and the M-series architecture designed around unified memory bandwidth, were likely made for reasons that had nothing to do with anticipating the current AI wave. They were made because Apple builds its own stack, and building your own stack means your hardware can be optimized for your software in ways no assembly of third-party components ever achieves. The M5, announced in late 2025, delivers over 4x the peak GPU compute performance for AI compared with the M4, according to Apple. Not because Apple planned to win the AI race, but because Apple kept building the way Apple builds.

There is something almost Darwinian about this. Institutional memory, accumulated through decades of decisions made for reasons that seemed local and specific at the time, can produce an organism that is oddly well-adapted to an environment that didn't exist when the adaptations were being made. Apple's vertical integration wasn't designed for edge AI. Apple's privacy architecture wasn't designed for edge AI. Apple's obsession with per-watt performance wasn't designed for edge AI. And yet: if you wanted to describe the company best positioned to make AI feel the way the Macintosh felt (intimate, personal, present without being intrusive, capable without demanding that you understand its internals), that description fits something that looks a great deal like the company Apple has been becoming all along.

Apple is not chasing a bigger brain. It may be, without fully knowing it yet, building toward a world where useful intelligence is ambient, personal, private, offline-capable, and mostly paid for in advance by the device you already own. If that world arrives, Apple's greatest AI achievement won't be a chatbot moment. It will be something quieter and more durable, and it will feel, in retrospect, inevitable, as Apple's best moves always do.

The next translation moment

The history of Apple is a history of translation moments, inflection points where a new form of human capacity found its interface layer, and the world reorganized around what became newly possible. The spreadsheet translated financial reasoning. HyperCard translated linked thinking. QuickTime translated time-based expression. The iPhone translated mobility and embodied experience. Each time, Apple’s contribution was not the raw capability but the layer that made the capability feel continuous with human lifestyles and ambitions.

AI will have its own translation moment. It will be the moment when ambient intelligence stops feeling like a separate application you invoke and starts feeling like an extension of your own cognition: present, private, contextual, available without friction. That moment requires exactly what Apple knows how to do: vertical integration, human-centered design, a willingness to subordinate capability to usability, and fifty years of accumulated institutional knowledge about what people actually want from their machines.

The company that was born in 1976 with the explicitly stated goal of bringing the power of computing to everyone has never been in the business of winning the arms race. It has been in the business of making the arms race irrelevant. Of waiting until the translation layer is ready, and then making the whole thing feel inevitable.

The intersection of liberal arts and technology was never a slogan. It was a description of the only kind of company that can do what needs to be done next, whether it knows it yet or not.

I think back to our middle school days, when computing was just beginning to arrive, rationed and uncertain, and none of us could see the full shape of what was assembling around us. We were standing at the edge of something. Apple's long arc was only beginning to come into view. Now, half a century on, the kids in those classrooms already have Apple devices in their hands. They carry the tools of the next translation moment in their pockets, on their wrists, pressed against their ears. They are waiting, as every generation before them has waited, for the spark that will make the possible feel obvious. The Age of AI is still Apple's to define.
